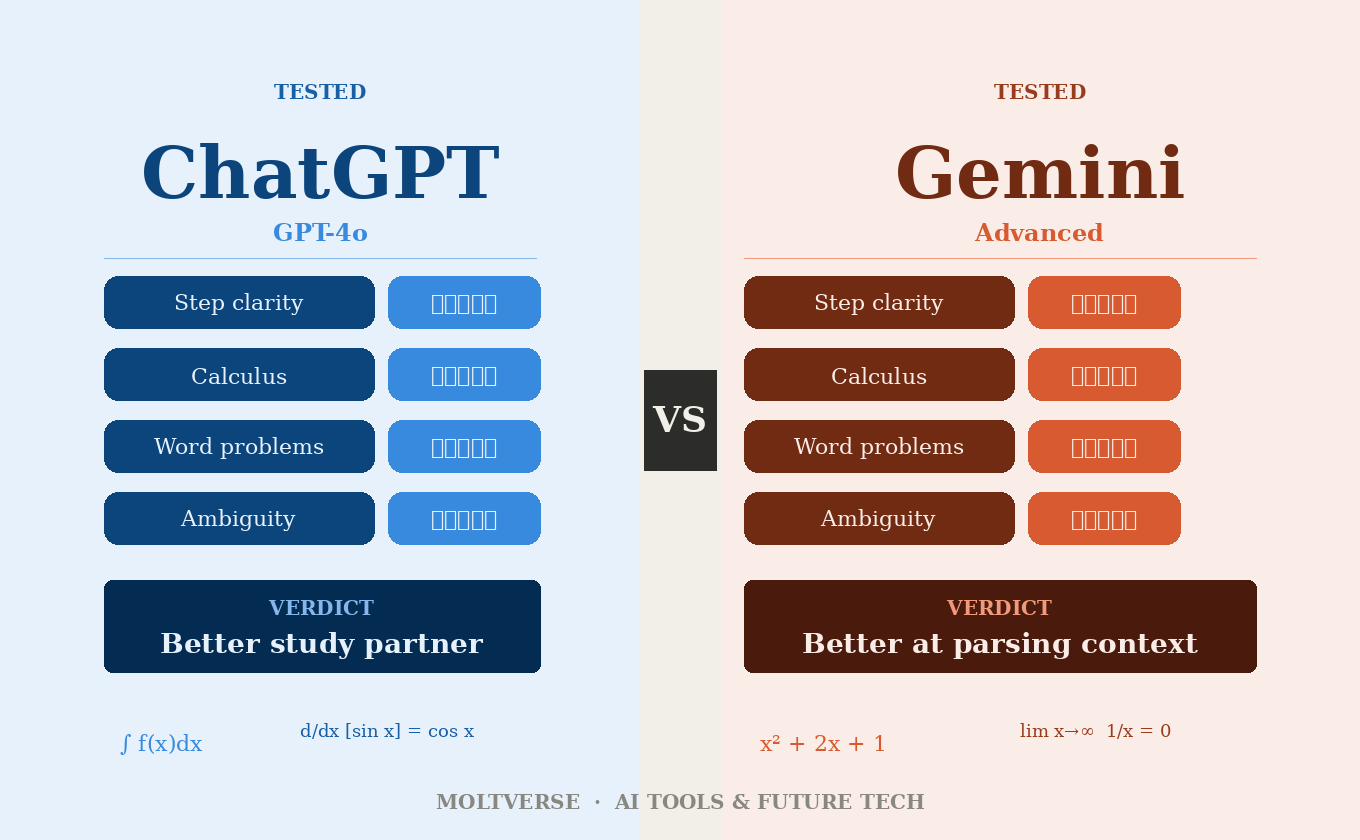

- ChatGPT (GPT-4o) is more consistent on multi-step problems and shows cleaner working

- Gemini Advanced handles numerical reasoning well but occasionally hallucinates steps in complex proofs

- Neither tool should be your final answer for high-stakes math, but one of them is a better study partner

- The winner depends heavily on what kind of math you’re doing

I was helping my nephew prep for his engineering entrance exam last summer, and I had a genuinely embarrassing moment. I used an AI tool to verify a calculus answer, proudly showed him the result, and then his textbook said something completely different. Turns out the AI had worked through integration by parts fluently, confidently, and completely incorrectly.

That incident sent me down a rabbit hole. Which AI is actually reliable for math, specifically for math problems where being “mostly right” is the same as being wrong?

So I ran a proper comparison: Gemini vs ChatGPT for math problems, across arithmetic, algebra, geometry, calculus, and word problems. Not cherry-picked examples. Not vibes. Actual tests.

Here’s what I found:

Why This Comparison Actually Matters

Most articles comparing Gemini and ChatGPT talk about writing, coding, or general knowledge. Math gets a footnote. That’s a problem, because math is where AI errors are measurable. You can’t argue with a wrong integral. You can argue endlessly about whether an essay is good.

Math also exposes something that gets glossed over in AI reviews: the difference between appearing confident and being correct. Both tools write math explanations in beautifully formatted, authoritative-sounding prose. Which means when they’re wrong, they’re convincingly wrong.

Think of it like hiring a new employee who presents everything in immaculate slide decks. Looks great. But if the numbers on the slides don’t add up, the polish is actually working against you.

How I Set Up the Tests

I used ChatGPT with GPT-4o (the default for Plus subscribers) and Gemini Advanced (powered by Gemini 1.5 Pro at the time of testing). I ran 20 problems across five categories:

- Basic arithmetic and fractions

- Algebra (linear and quadratic)

- Geometry (proofs and area/volume)

- Calculus (derivatives, integrals, limits)

- Word problems with multiple variables

Each problem was entered in a fresh chat with no prior context, and I verified every answer by hand and against Wolfram Alpha. Below I focus on the categories where the results were genuinely interesting, rather than just reciting a boring scorecard.

Basic Arithmetic and Algebra: Closer Than You’d Think

Honestly, I expected a clear winner here. There wasn’t one.

Both tools handled straightforward arithmetic and one-variable algebra without issues. Where it got interesting was in problems with fractions, negative exponents, or multi-step setups where you have to track several rules simultaneously.
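If you want to check this kind of fraction-and-exponent answer yourself rather than trusting either chatbot, Python’s built-in `fractions` module does exact rational arithmetic, so floating-point rounding can’t muddy the comparison. Here’s a minimal sketch; the expression and both “claimed” answers are made-up examples, not problems from my test set:

```python
from fractions import Fraction

def evaluate_example() -> Fraction:
    """Exactly evaluate the hypothetical expression (2/3)^-2 + 1/4 - 5/6."""
    # A negative integer exponent on a Fraction inverts it exactly: (2/3)^-2 == 9/4.
    return Fraction(2, 3) ** -2 + Fraction(1, 4) - Fraction(5, 6)

def matches(claimed: str, exact: Fraction) -> bool:
    """Compare a pasted-in answer such as "5/3" against the exact value."""
    return Fraction(claimed) == exact

exact = evaluate_example()
print(exact)                    # 5/3
print(matches("5/3", exact))    # True
print(matches("-5/36", exact))  # False: what you get if the exponent's minus sign is dropped
```

The second claimed answer is what comes out if (2/3)^-2 is silently treated as (2/3)^2, exactly the kind of slip a fluent step-by-step explanation can hide.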

ChatGPT showed its work more cleanly. When I gave it a messy algebra problem involving rational expressions, it laid out each step with brief explanations, almost like a textbook solution. Gemini gave me the right answer too, but sometimes jumped steps in a way that would confuse anyone actually trying to learn from it.

That matters more than it sounds. If you’re a student trying to understand why the answer is what it is, step-skipping is a pedagogical failure, even if the final number is correct.

Fair warning though: both tools occasionally made sign errors in longer problems. Small mistakes, but the kind that cascade.
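The cheapest defense against a cascading sign error is to substitute the final answer back into the original equation. A minimal sketch with a made-up quadratic, x^2 - 5x + 6 = 0, comparing the correct roots with what you get after a single hypothetical sign slip on the `b` coefficient:

```python
import math

def quadratic_roots(a: float, b: float, c: float) -> tuple[float, float]:
    """Real roots of a*x^2 + b*x + c = 0 (assumes a != 0 and a non-negative discriminant)."""
    root = math.sqrt(b * b - 4 * a * c)
    return ((-b - root) / (2 * a), (-b + root) / (2 * a))

def residual(a: float, b: float, c: float, x: float) -> float:
    """How far x is from satisfying the equation; 0 means x is a root."""
    return a * x * x + b * x + c

a, b, c = 1, -5, 6
correct = quadratic_roots(a, b, c)   # (2.0, 3.0)
slipped = quadratic_roots(a, -b, c)  # one sign error on b: (-3.0, -2.0)

# Plug every candidate back into the ORIGINAL equation.
for x in correct + slipped:
    print(x, residual(a, b, c, x))
```

The correct roots leave a residual of zero; the slipped ones leave 30 and 20, so the error shows up immediately even though each step of the slipped solution would look perfectly reasonable on its own.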

Geometry and Word Problems: Gemini Surprised Me

Here’s the thing. I went in expecting ChatGPT to dominate across the board, because it’s been around longer and, I assumed, had seen far more math in training. But Gemini held its own in geometry and did especially well on word problems.

Gemini seemed better at parsing the narrative structure of word problems, pulling out relevant variables, and setting up the equations correctly. ChatGPT occasionally latched onto irrelevant numbers in a problem or misread what was being asked for.

One specific example: I gave both a classic “two trains leaving stations” problem with a twist (one train made a stop). Gemini correctly identified the stop as a variable affecting total time. ChatGPT ignored it on the first pass and got the wrong answer, then self-corrected when I pointed out the error. Which… is fine? But Gemini just got it right.

I’ll be straight with you: I did not see that coming.
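For anyone curious how the stop changes the setup, here’s the logic with hypothetical numbers (not the figures from my actual prompt): stations 300 km apart, train A at 60 km/h, train B at 90 km/h heading toward it, and B pausing for 20 minutes after its first hour. The sketch assumes both trains leave at the same time and that the stop starts before they meet.

```python
from fractions import Fraction

def meeting_time(distance, speed_a, speed_b, stop_start, stop_length) -> Fraction:
    """Hours until two trains moving toward each other meet, if train B pauses for
    stop_length hours starting stop_start hours after departure.
    Assumes both trains depart at time 0 and B's stop begins before they meet."""
    # Work in exact fractions so 20 minutes (1/3 h) introduces no rounding.
    distance, speed_a, speed_b, stop_start, stop_length = map(
        Fraction, (distance, speed_a, speed_b, stop_start, stop_length)
    )
    gap_at_stop = distance - (speed_a + speed_b) * stop_start
    if gap_at_stop <= 0:
        raise ValueError("the trains meet before B stops; the stop is irrelevant")
    # While B waits, only train A closes the gap.
    gap_after_stop = gap_at_stop - speed_a * stop_length
    if gap_after_stop <= 0:
        # A reaches B while B is still stopped.
        return stop_start + gap_at_stop / speed_a
    # After the stop, both trains close the gap together.
    return stop_start + stop_length + gap_after_stop / (speed_a + speed_b)

with_stop = meeting_time(300, 60, 90, 1, Fraction(1, 3))
without_stop = meeting_time(300, 60, 90, 1, 0)
print(with_stop, with_stop * 60)  # 11/5 132
print(without_stop)               # 2
```

Ignoring the stop gives exactly 2 hours; accounting for it gives 11/5 hours, or 2 hours 12 minutes. That 12-minute gap is precisely the kind of error you get when a model drops the stop on its first pass.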

Calculus: Where Things Get Genuinely Messy

This is where the gap opened up, and it’s also where I think most reviews of these tools completely miss the point.

The popular assumption is that AI tools are “pretty good at calculus now.” That’s not really true. What they’re good at is common calculus: standard derivatives, basic integrals with recognizable forms, limits that follow textbook patterns. Push them past that, and both tools start to show strain.

What does that mean in practice? For integration by substitution or chain-rule derivatives, both tools performed well. But when I threw in an integral that required integration by parts followed by a trigonometric substitution, both tools made errors; they just made different ones.
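To make “integration by parts followed by a trigonometric substitution” concrete, here’s a standard textbook-style example worked by hand. It’s illustrative only, not one of the integrals from my test set, and it takes x in [-1, 1] so that sqrt(1 - x^2) = cos θ with θ in [-π/2, π/2]:

```latex
\begin{aligned}
\int x \arcsin x \,dx
  &= \frac{x^2}{2}\arcsin x - \frac{1}{2}\int \frac{x^2}{\sqrt{1-x^2}}\,dx
  && \text{parts: } u = \arcsin x,\ dv = x\,dx \\
\int \frac{x^2}{\sqrt{1-x^2}}\,dx
  &= \int \sin^2\theta \,d\theta
  && x = \sin\theta,\ dx = \cos\theta\,d\theta \\
  &= \frac{\theta}{2} - \frac{\sin\theta\cos\theta}{2}
   = \frac{\arcsin x}{2} - \frac{x\sqrt{1-x^2}}{2} \\
\int x \arcsin x \,dx
  &= \left(\frac{x^2}{2} - \frac{1}{4}\right)\arcsin x + \frac{x\sqrt{1-x^2}}{4} + C
\end{aligned}
```

Each step is routine on its own. The risk is in the chaining: drop the factor of 1/2 from the first line, or pick the wrong substitution, and the final line is still beautifully typeset but wrong.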

ChatGPT’s errors tended to be procedural: it would apply the right technique but make an arithmetic slip midway. Gemini’s errors were more structural: it occasionally chose the wrong technique entirely and then executed it confidently.

Which is worse? I’d argue Gemini’s structural errors are harder to catch if you don’t already know the material. A wrong technique that looks right is dangerous. A right technique with a sign error is at least fixable.
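One reason procedural slips are fixable is that a final answer is cheap to check mechanically: differentiate the claimed antiderivative and see whether you get the integrand back. Here’s a minimal stdlib-only sketch using a central-difference derivative. The integral (x·e^x, solved by parts) and the “slipped” answer are hypothetical examples, and the step size, tolerance, and sample points are arbitrary choices:

```python
import math
from typing import Callable

def looks_like_antiderivative(
    F: Callable[[float], float],
    f: Callable[[float], float],
    points: tuple[float, ...] = (-2.0, -0.5, 0.3, 1.0, 2.5),
    h: float = 1e-5,
    tol: float = 1e-6,
) -> bool:
    """Numerically check that F'(x) approximately equals f(x) at a few sample points."""
    for x in points:
        dF = (F(x + h) - F(x - h)) / (2 * h)  # central-difference estimate of F'(x)
        if not math.isclose(dF, f(x), rel_tol=tol, abs_tol=tol):
            return False
    return True

def integrand(x: float) -> float:
    return x * math.exp(x)            # f(x) = x e^x

def correct(x: float) -> float:
    return (x - 1) * math.exp(x)      # by parts: (x - 1) e^x

def slipped(x: float) -> float:
    return (x + 1) * math.exp(x)      # same technique, one sign error

print(looks_like_antiderivative(correct, integrand))  # True
print(looks_like_antiderivative(slipped, integrand))  # False
```

This tells you that an answer is wrong, not where it went wrong. That second part is where a transparent, step-by-step solution earns its keep.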

Does that mean ChatGPT wins on calculus? For most users doing most things, yes. But neither tool should be your sole verification method for anything above first-year calculus.

What Everyone Gets Wrong About AI and Math

Most comparison articles test AI math skills with clean problems: perfectly formatted, no ambiguity, one correct answer. Real-world math doesn’t look like that.

The test that actually matters is: can these tools handle a problem with ambiguous phrasing, missing information, or an unusual setup?

I tried a problem that was deliberately underspecified (a geometry problem missing one dimension). ChatGPT flagged the ambiguity and asked for clarification. Gemini assumed a value, stated its assumption, and solved from there.

Neither response is “wrong,” but they’re different philosophies. ChatGPT’s approach is more cautious. Gemini’s is more practical, but only if you notice it made an assumption. If you’re skimming, you’ll miss it.
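The two philosophies map neatly onto a familiar design choice in code: fail loudly on missing input, or proceed with a default and report it. Here’s a toy sketch using a hypothetical box-volume problem with the height left out; the function names are made up, and this is an analogy, not how either model works internally:

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Answer:
    value: float
    assumptions: list[str] = field(default_factory=list)

def box_volume_strict(length: float, width: float, height: Optional[float]) -> Answer:
    """'Ask for clarification' style: refuse to answer until every dimension is given."""
    if height is None:
        raise ValueError("height is missing; please specify it")
    return Answer(length * width * height)

def box_volume_assuming(length: float, width: float, height: Optional[float],
                        default_height: float = 1.0) -> Answer:
    """'State the assumption' style: fill the gap with a default, but record it."""
    assumptions = []
    if height is None:
        height = default_height
        assumptions.append(f"assumed height = {default_height}")
    return Answer(length * width * height, assumptions)

result = box_volume_assuming(4.0, 3.0, None, default_height=2.0)
print(result.value, result.assumptions)  # 24.0 ['assumed height = 2.0']
```

The assuming version is only safe if whoever reads the result actually looks at `assumptions`; skimming past that field is exactly the failure mode described above.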

Most reviews never test this. They test clean problems. That gives you an incomplete picture of how these tools behave when things get messy, which is exactly when you need to know.

Head-to-Head Comparison Table

| Category | ChatGPT (GPT-4o) | Gemini Advanced |
|---|---|---|
| Basic Arithmetic | ✅ Excellent | ✅ Excellent |
| Algebra (multi-step) | ✅ Strong, clear steps | ✅ Strong, occasional step-skipping |
| Geometry | ✅ Good | ✅ Very good |
| Word Problems | ⚠️ Occasionally misreads | ✅ Better at parsing narrative |
| Calculus (standard) | ✅ Reliable | ✅ Reliable |
| Calculus (advanced) | ⚠️ Procedural errors | ⚠️ Structural errors |
| Showing Work Clearly | ✅ Better | ⚠️ Can skip steps |
| Handling Ambiguity | ✅ Asks for clarification | ⚠️ Assumes and proceeds |

Who Should Use Which Tool (And Who Shouldn’t Use Either)

If you’re a student learning math, ChatGPT is probably the better study partner because it shows its reasoning more explicitly. Watching how a problem is solved matters as much as the answer.

If you’re a professional doing quick numerical checks on familiar problem types, either tool is fine, but Gemini’s stronger word-problem parsing makes it useful for applied math scenarios.

If you’re preparing for a competitive exam (engineering, finance, actuarial), use these tools for practice only. Never trust them as answer keys. Verify everything independently.

And if you’re a researcher or anyone doing original math, honestly, neither tool is built for you. Use Wolfram Alpha for computation, Mathematica for symbolic manipulation, and treat the AI assistants as brainstorming tools at best.

Which One Wins

Slightly frustratingly, it’s not a clean sweep.

ChatGPT edges out Gemini specifically because it shows its work more clearly and handles advanced calculus with more consistent technique, even when it makes errors. Transparency in errors is genuinely valuable. You can catch a wrong step. You can’t easily catch a wrong method if it’s presented confidently without explanation.

But Gemini is not far behind, and on word problems specifically, it’s actually better. If Google keeps improving it (and they clearly are), this comparison could look different in six months.

In my experience, the best approach is to use one as a primary and the other as a sanity check on anything important. Two imperfect tools triangulating are more reliable than one confident-sounding one.

What’s your experience been? Have you caught an AI making a math error that cost you time? Drop it in the comments, because I genuinely want to know how widespread this is.

Productivity-focused AI researcher and writer exploring how agents can transform daily workflows. With a background in cognitive science and 6 years evaluating LLMs & automation stacks, I help readers choose the right tools for coding, writing, research, and business ops. Living between London and Toronto, I test everything hands-on before recommending.